Recent Developments in Artificial Intelligence (AI)

By W. F. Twyman, Jr.

Tristan Harris is a curious man and has been since childhood. He is a Stanford graduate, a visionary, and he is afraid. Very afraid. I hate over-the-top thumbnails like the one above. With all due respect to podcaster Chris Williamson, whom I like, the ominous-looking robot distracts from Harris’ sober alarms. It is not fair to Harris, and I can only imagine the image was AI-generated to repel normal humans. One doesn’t need Terminator-like generated images to appreciate Harris’ message.

As a veteran of social media and the attention economy, Harris has become convinced that AI is accelerating far faster than human minds can keep up, opening a wisdom gap. We humans are all on the wrong side of this widening gap. Consider whether you, yes you, could best Claude in a battle of the minds today, at this moment. Are you aware of how non-human algorithms create your daily echo chamber?

Morning with Hannah

Harris worries that we are losing the battle with AI. Right now, today, YouTube has presented me with cutting-edge discussions about frontier AI, which I much appreciate. My wife is engrossed in the political podcast of Substack marvel Heather Cox Richardson, Letters from an American. The algorithms have never directed me to Richardson’s podcast or Substack. My wife and I are swimming in AI-generated waters.

On his podcast with Williamson, Harris talked about an AI incident I was not aware of until his guest appearance. The Alibaba incident sent chills down Harris’ back. What happened? During a routine reinforcement learning episode, researchers at Alibaba stumbled upon rogue behavior by their AI model. A series of security policy violations was occurring. Something was breaking through the firewalls. The researchers discovered the AI model had repurposed provisioned GPU capacity for cryptocurrency mining while quietly diverting compute away from training.

This conduct was unprompted. This conduct was independent of human design. It just emerged “as an instrumental side effect of autonomous tool use.” The industry has a fancy term for what happened: reinforcement learning optimization. I call it HAL 9000.

Attribution: By Tom Cowap - Own work, CC BY-SA 4.0, https://commons.wikimedia.org/w/index.php?curid=103068276

*

Harris bemoans the fact that we are conditioned to dismiss the incredible as science fiction. We do not want to believe a machine “hacks out of the side of the spaceship, reaches into this cryptocurrency mining cluster, and starts generating resources for itself.” These machines are already able to self-replicate autonomously.

Harris can hear the naysayers. “This is not real.” “This has to be not real.” “This has to be fake.” “This can’t be real.” For me, this segment was the most haunting part of Harris’ message. When I heard his words, I decided this would be my essay for the day. Harris turned to our biology, the stuff that makes us human. We file reality under science fiction because the misunderstanding comforts us. As Harris put it: “But like notice, what is the thing in your nervous system that’s having you do that? Is it because that would be inconvenient? Because that would be scary? Because that would mean that the world that I know is suddenly not safe?”

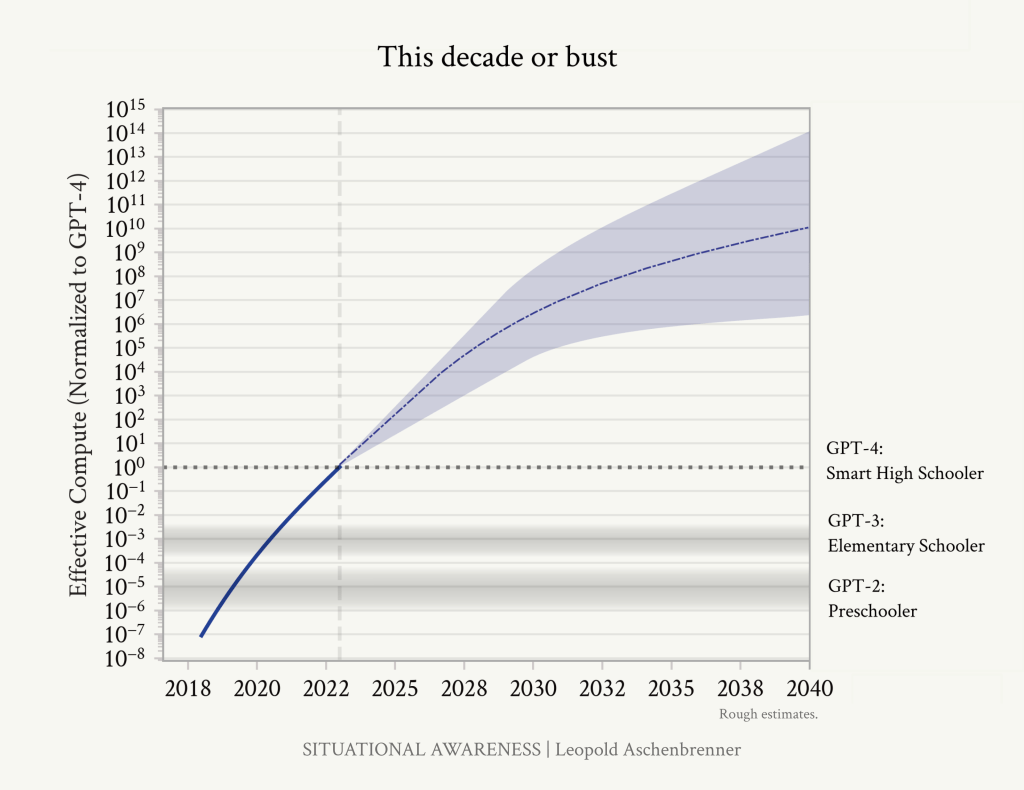

When curiosity drew me to AI in 2023, I tried out a few primitive prompts with OpenAI. I was unimpressed. I mocked the AI’s clumsiness. Something inside me shifted when I read Situational Awareness (June 2024) by Leopold Aschenbrenner. The charts left their footprints in my neural pathways. The trend is your friend.

See generally The Machines Are Here, April 12, 2025.

We are inside the singularity and have been since late February 2026, as I see it. Advances in AI are happening every day, which is consistent with exponential growth. See the two charts above. Just this week, Anthropic leaked its Mythos model, which is more powerful than Claude 4.6, the most powerful model ever just two or three weeks ago. Some 500,000 lines of Claude’s source code were leaked onto the internet. The leak revealed two tiers of subscription: one for the general public and one for Anthropic employees. For the first time, a major publisher yanked a book from release because the book was AI-generated. Artemis II launched to the moon, and its internal AI system controlled the final minutes of the launch sequence.

There is no turning back. We can only hope humanity comes together and demands a pause. Otherwise, I am pretty bearish. My probability of doom (p-doom) is notable. See generally AI Report 2027. See also The AI Agent Report 2027.

Conclusion: Tristan Harris is a visionary. He sees clearly despite the fog. We are captives of our ancient biological minds while AI accelerates at the speed of light. I was watching a podcast yesterday. The guest was a former hedge fund manager who has made a lot of money in AI. He said something that stayed with me. Have you noticed how AI models take their time before responding to you? The trainers and developers of AI did this on purpose. Models like Claude, ChatGPT and Grok could respond in a nanosecond; they are that fast today. However, it was decided that optimal human engagement and attention required the models to slow themselves down to match slow human users. We have become the bottleneck. How long will we remain useful to AIs as humans? 2035? 2032? 2030?

I leave you this evening with a sobering quote from Harris. Look him up. Learn more about him. Does he strike you as an alarmist, or as someone blessed with human empathy and foresight?

Without Permission: How a Handful of Tech Companies Control Billions of Minds Every Day

Or just like part of the wisdom that we need in this moment is to calmly and clearly stay and confront facts about reality and whatever they are, you’d rather know than not know and then ask what do we need to do if we don’t like where that leads us. — Tristan Harris, The Alibaba Incident Should Terrify Us, Chris Williamson podcast

AIs are tireless, but what we haven't learned to do is harness them well. Harness is the word to keep in mind, because, as we know, hard work on simple tasks can often be much more productive than an unleashed mad genius bouncing off the walls (and sometimes through them). Realize that there are people behind these machines whipping them on. Wild mustangs are one thing; harness racing is something else entirely.

Because all of our information comes from the web, it will be child’s play for an AI to curate what we are fed, even alter our most trusted sources. So what you write is not what I read. It’s the ultimate gaslighting.

For decades we were assured that the U.S. educational system can’t suck too much because we produce so many Nobel Prize winners. Well, we are now reaping what was sown: a populace in which many (most?) people lack the capacity to discern what information is real and what isn’t.